A few years ago, all pen-tests were done manually; there is now a growing number of automatic pen-test tools. In this blog we will discuss the relative merits of each approach, and also briefly compare the tools to a vulnerability scanning solution.

Penetration testing (pen-testing) is a targeted way of preventing cyber-attacks. In simple terms, a pen-testing consultant will think like a malicious actor and use their skills to try to gain access to your systems, documenting their findings along the way.

What is the attack surface?

When undertaking penetration testing it is important to understand what is being tested, as the functionality of each tool varies depending on the environment. To deal with this, I consider testing the following different aspects of security:

Discussed in this blog:

- Infrastructure / network

- Web Applications

- Phishing / Social Engineering

- Wi-Fi

Not discussed here:

- Physical Security

- Mobile Device

Use our Cyber Security Maturity Assessment Model to assess your current security posture, attack surface, and existing plans and solutions. In simple terms, where does your security strategy stand? What are your biggest risks? What are your regulatory and compliance obligations? Where should you focus your efforts? What are your aspirations?

Infrastructure Testing

This is probably the area where the most tools are available, and also the area where organisations struggle most to remain secure, so it offers the biggest opportunity for an adversary. That also makes it a great place to focus pen-testing.

If you are not familiar with some of the types of exploits a tester may use, the following explains them in more detail:

Remote Code Execution (RCE)

This is what every hacker loves to look for first. RCE gives an attacker the ability to run their code on your system. Sometimes the user of that system needs to click a button to accept something, but in other cases, such as EternalBlue (the exploit behind WannaCry), the user is completely unaware of what is going on, and the attacker’s code just runs.

Much as this is desirable from a testing perspective, stand back and think about what it means in a corporate environment: a live system is being forced to run code that has never been tested in that configuration before.

Add to that, some exploits don’t work every time, and the side effect can be a “hung” service or machine.

So this is a very powerful technique that an attacker may use freely because they don’t care about the consequences, but that a pen-tester has to use with extreme caution.

Privilege Escalation

Here an attacker’s code is running as a normal user (say from the RCE above), but the attacker wants to become a privileged user, so that they can perform advanced tasks such as deleting backups, or deleting log files to cover their tracks.

To achieve this the attacker runs even more code, to exploit further vulnerabilities, to get privileged access.

Recently, Microsoft had issues with its domain security, and an exploit called Zerologon (CVE-2020-1472) was developed that allows an attacker to gain domain admin permissions. The exploit worked, but it had a significant impact on the domain.

Again, an attacker may use this and not care about the side effects, but a responsible pen-tester has to use caution.

Identity Attacks

Simply put, this is guessing passwords based on defaults, dictionary attacks, or just brute force. The brute force may be simply trying lots of passwords, or it may be taking a password hash and finding a password that gives the same hash (potentially with rainbow tables).
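
To make the hash-reversal idea concrete, here is a minimal sketch of a dictionary attack against a password hash. It assumes an unsalted SHA-256 hash and a tiny, hypothetical wordlist, and it uses a plain precomputed lookup table rather than a true rainbow table (which stores hash chains to save space). The function names are ours, for illustration only.

```python
import hashlib


def sha256_hex(password: str) -> str:
    """Return the hex SHA-256 digest of a password (unsalted, illustration only)."""
    return hashlib.sha256(password.encode("utf-8")).hexdigest()


def build_lookup(wordlist):
    """Precompute a hash -> password table for every candidate in the wordlist."""
    return {sha256_hex(word): word for word in wordlist}


def recover_password(target_hash, lookup):
    """Return the candidate that produces target_hash, or None if there is none."""
    return lookup.get(target_hash)


# Hypothetical wordlist of defaults and common choices.
WORDLIST = ["admin", "password", "letmein", "Summer2021"]
LOOKUP = build_lookup(WORDLIST)

# Stands in for a hash taken from a system under test.
stolen_hash = sha256_hex("letmein")
print(recover_password(stolen_hash, LOOKUP))
```

A password that is not in the wordlist is simply not found, which is why attackers use very large lists, and why salting each hash makes a shared precomputed table far less useful.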

If you have a system that doesn’t limit password tries, and you have a valid user name, then it is only a matter of time until password guessing will succeed. There are many tools out there that will do this for you automatically.
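
The "matter of time" can be estimated with some simple arithmetic. The sketch below assumes a password drawn from a fixed alphabet and a constant guess rate; both figures in the example are illustrative assumptions, not measurements of any real system.

```python
def worst_case_guesses(alphabet_size: int, length: int) -> int:
    """Number of candidates an exhaustive search must try in the worst case."""
    return alphabet_size ** length


def worst_case_hours(alphabet_size: int, length: int, guesses_per_second: float) -> float:
    """Hours needed to try every candidate at a constant guess rate."""
    return worst_case_guesses(alphabet_size, length) / guesses_per_second / 3600


# Six lowercase letters, with no limit on attempts (assumed 1,000 guesses/second).
print(worst_case_guesses(26, 6))
print(round(worst_case_hours(26, 6, 1000), 2))

# The same password behind a lockout policy of 5 attempts per 15 minutes.
print(round(worst_case_hours(26, 6, 5 / 900)))
```

The comparison between the last two figures is the point: limiting password tries does not change the number of candidates, but it changes the guess rate by orders of magnitude.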

When attacks occur, often a walk-through of what the attacker did is published, so that others can learn. In many of these walk-throughs, an internal IT team shares a user name and password for some system or another, a team member then leaves, and that user name and password are released to the dark web. This identity is then used for an attack.

Other attacks

There are many other attacks and variants of the above which we could go into, but the above are the cornerstones of most attacks, have the greatest impact and are most frequently used.

Manual Testing

A tester will survey their environment and work with the local teams to agree on a test plan. Low-risk exploits can be run at any time; high-risk exploits are run just after backups, when the staff are ready to correct any hang or system crash.

Over time a pen-tester will know which exploits they can trust, which have unintended side effects, and where caution should be used.

Exploits are also hard to write. A tool like Core Impact (where the vendor writes, documents, and tests all of their own exploits) has around 4,500 exploits that have been built up over 20 years. The Metasploit framework has a few more than Core Impact, but they are contributed by the community, so the quality and reliability vary significantly.

The tester will modify their plan based on the findings collected as the test proceeds. Not all tests will be based on exploits; some will be based on misconfigurations, or on combinations of both.

How a misconfiguration causes problems

Consider a web application that allows files to be uploaded (reasonably common).

Then consider that this application is misconfigured and has that upload folder set as executable.

Now an attacker can upload a file called “attack.php” and then navigate to it from a browser. As the folder is executable, the server will run “attack.php”, which will run the code within the file provided by the attacker.

This is not an exploit; it is a misconfiguration. It is something that you would expect to find as a result of a pen-test.
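
A tester might confirm this kind of misconfiguration with a harmless probe file rather than real attack code. The sketch below only interprets the response the tester gets back when browsing to the probe; the upload and the HTTP request are left out, and the probe text and function name are hypothetical.

```python
# Harmless probe: if the server executes it, the page shows "probe-42".
# If the server treats it as a static file, the raw source is returned instead.
PROBE_SOURCE = "<?php echo 'probe-' . (6 * 7); ?>"


def upload_folder_executes(response_body: str) -> bool:
    """Return True if the response shows the probe was run, not just served."""
    executed = "probe-42" in response_body
    served_as_source = "<?php" in response_body
    return executed and not served_as_source


# Response from a misconfigured (executable) upload folder.
print(upload_folder_executes("probe-42"))

# Response from a correctly configured folder that serves the file as text.
print(upload_folder_executes(PROBE_SOURCE))
```

The probe computes a value that does not appear in its own source, so a server that merely echoes the file back cannot produce a false positive.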

Automatic Testing

With automatic tools you typically pick an attacker profile then let the system run. Some profiles will only run the “safe” exploits, others will run the potentially dangerous exploits too.

The number of and source of the exploits for the tool also needs to be looked at closely.

You need a large number of well tested exploits:

- The smaller the number of exploits available, the less value the tool has.

- If the tests are just “community” exploits, then they are potentially very dangerous.
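
As a rough illustration of what an attacker profile does, the sketch below filters a catalogue of exploits by risk and by whether the vendor has tested them. The data model, profile names, and example entries are all hypothetical and are not taken from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Exploit:
    name: str
    may_crash_target: bool  # known to hang or crash services in some cases
    vendor_tested: bool     # written and tested by the vendor, not the community


def select_exploits(catalogue, profile):
    """Return the exploits that a given profile is allowed to run."""
    if profile == "safe":
        return [e for e in catalogue
                if e.vendor_tested and not e.may_crash_target]
    if profile == "aggressive":
        return list(catalogue)
    raise ValueError(f"unknown profile: {profile}")


# Hypothetical catalogue entries.
CATALOGUE = [
    Exploit("default-credentials-check", may_crash_target=False, vendor_tested=True),
    Exploit("remote-code-execution-poc", may_crash_target=True, vendor_tested=True),
    Exploit("community-contributed-poc", may_crash_target=False, vendor_tested=False),
]

print([e.name for e in select_exploits(CATALOGUE, "safe")])
```

Note that the filter is fixed in advance: the tool runs whatever the profile permits, which is exactly the lack of on-the-fly judgement described below.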

The tool will do what it is told. It will not make decisions on the fly. Many in the industry talk about “using AI”, but from what we have seen so far, this is not making a big impact just yet.

An interesting thought: exploits are like weapons (and exploit frameworks are regulated like them by the US government), so giving weapons to even a good AI would in itself be potentially dangerous!

Our Thoughts

You have to ask yourself:

“Why are you performing a pen-test?”

Pen-tests are dangerous because they can have unintended impacts on your live environments, even when done by an experienced tester.

If your regulator says that you have to run a pen-test (say, for PCI DSS) then fair enough; otherwise, organisations typically use them to test for things that they have not already found.

A vulnerability scanner will do a great job of finding issues within your environment. Scanners are easy to use, safe in a live environment, need little human resource, and are reasonably priced. This is where people should start. By way of metrics, Tenable has 110,000 plugins (which roughly equates to the number of vulnerabilities that it looks for), while Core Impact has 4,500 exploits. See our blog on “pen-test vs vulnerability scan” for more discussion.

An automatic pen-test tool will do little more than tell you a subset of what the vulnerability scanner will.

There may also be a danger with automatic pen-test tools: they may attempt an exploit that fails because some end-point software prevents it. The same end-point software may not block the attack if the same vulnerability were exploited in a different way, say by a real attacker. This gives a false negative.

We have also not seen automatic tools with large databases of well-tested exploits.

A manual pen-test should find things that a vulnerability scanner would not.

Our advice is to have vulnerability scanning as part of a Risk Based Vulnerability Management program. Then use pen-testing to find additional issues, not found by the scanner, that feed into the program.

Read our blog: Risk Based Vulnerability Management for Enterprise Organisations.

Web Application Testing

Here the tester is looking for things like SQL Injection or Cross Site Scripting issues. This is discussed best by OWASP so I will not dwell further here.

Manual Testing

The manual pen-testing tools are very powerful and allow things like exfiltrating data from databases, installing key-loggers into browsers and lots of other interesting things.

With infrastructure / network testing (above) it is common to string a number of attacks together, for example:

- Get some code to run

- Escalate privilege

- Pivot to another machine

And a human usually decides what is the best next step.

With web application testing tools, this sort of linked testing is far rarer. Organisations seem more interested in just finding and fixing the issues. The pen-tester therefore focuses on just finding the issues, not exploiting them.

Automatic Testing

There are a number of DAST tools that claim to do an automatic pen-test, but are really just scanning and verifying that an issue exists. A DAST tool may find a SQL injection vulnerability and run the SQL command "SELECT @@VERSION;" to verify that it can be exploited. That command just returns the system version so is safe.

In a manual test, the tester could use any SQL statement they wanted, for example to exfiltrate an entire table. Whilst that is interesting, and something that an attacker may do, the first statement is all that is needed to prove that the vulnerability exists.
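
The difference between proving and exploiting a SQL injection issue can be sketched with Python's built-in sqlite3 module. This is an illustration under stated assumptions: the table, data, and function names are made up, and because SQLite has no @@VERSION, the harmless probe calls sqlite_version() instead.

```python
import sqlite3

# In-memory database with made-up data, standing in for the application's store.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
conn.executemany("INSERT INTO users VALUES (?, ?)",
                 [("alice", "s1"), ("bob", "s2")])


def find_user_vulnerable(conn, name):
    """BAD: user input is concatenated straight into the SQL statement."""
    query = f"SELECT name FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    """GOOD: a parameterised query treats the input purely as data."""
    return conn.execute("SELECT name FROM users WHERE name = ?", (name,)).fetchall()


# Harmless probe, in the spirit of a DAST verification step.
probe = "' UNION SELECT sqlite_version() --"
# What a manual tester (or attacker) could do with the same flaw.
dump = "' UNION SELECT secret FROM users --"

print(find_user_vulnerable(conn, probe))  # returns the database version
print(find_user_vulnerable(conn, dump))   # returns every secret in the table
print(find_user_safe(conn, probe))        # returns no rows
```

Both payloads rely on the same flaw, so the version probe is enough to prove it exists; the parameterised version is unaffected by either.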

Read our blog: What’s the difference between DAST vs SAST vs IAST?

Our Thoughts

There are two main use cases for security testing of web applications so the usage varies.

In-house Built Web Application

If the web application (or web site) is built by an internal team, then testing of the application really needs to be pushed back into the development cycle. Insecure code should never be put live.

This is easy to write but, in reality, will take a year or so of organisational change to achieve, so a transition makes sense.

The DAST tools (and IAST / SAST tools for that matter) do a great job but they don’t check configuration issues. We therefore recommend embedding the scanning technologies into the development lifecycle, then pen-testing the live site for configuration problems.

Purchased Web Applications

When you purchase a web application, you would hope that it is free of security issues; this is rarely the case. Here we recommend scanning with DAST tools, then feeding that information into a Risk Based Vulnerability Management program to track and manage the patching & remediation.

This should be supplemented by occasional manual pen-tests to check for configuration issues, weak passwords and so on.

Phishing / Social Engineering

This is one of the hardest attacks to protect against, and is how most of the ransomware attacks start.

If a staff member receives an email, apparently from their boss, titled “As discussed” and with an Excel attachment, it will almost certainly get opened. The question is what damage it will do, and how easy it is to spot that it isn’t really from the boss.

There are two elements that need to be checked here:

- The IT infrastructure should make it easy for a user to do the right thing, potentially by blocking an email, quarantining an attachment, noticing links to malicious sites and so on.

- Users should behave in certain ways to protect the company.

A pen-tester can test both of these. General phishing attacks are easy to simulate; a “spear phishing” attack requires more research and effort.
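
On the infrastructure side, one simple check is whether an email uses a senior person's name while coming from outside the company. The sketch below is a minimal illustration using Python's standard email.utils module; the corporate domain and the list of names are hypothetical, and a real mail gateway would apply many more checks than this.

```python
from email.utils import parseaddr

CORPORATE_DOMAIN = "example.com"      # hypothetical company domain
KNOWN_SENIOR_NAMES = {"jane smith"}   # hypothetical names worth impersonating


def looks_like_impersonation(from_header: str) -> bool:
    """Flag mail that shows a senior person's name but an external address."""
    display_name, address = parseaddr(from_header)
    domain = address.rpartition("@")[2].lower()
    uses_senior_name = display_name.strip().lower() in KNOWN_SENIOR_NAMES
    return uses_senior_name and domain != CORPORATE_DOMAIN


print(looks_like_impersonation("Jane Smith <jane.smith@examp1e.net>"))
print(looks_like_impersonation("Jane Smith <jane.smith@example.com>"))
```

A check like this helps the user do the right thing, for example by adding a warning banner, but it does not replace the user behaviour described in the second point.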

Phishing vs Spear Phishing

We have all had the email from HSBC about problems with our account, or from DHL about an important parcel that needs to be delivered. These emails are all about getting the user to click on a link, open an attachment or do something else that will give an attacker a foothold. This is what we call phishing, and we are all familiar with spotting these emails, so they don’t work very well.

Now imagine an email, supposedly from the head of sales to a member of the sales team, called “New commission plan”, or from the CISO to the pen-testing team called “Corporate migration from Windows to Linux”. These emails have the same objective (get the user to take some action), but because they are targeted and look like they come from our boss, our defences are a little lower. Welcome to spear phishing. It takes human resource to research, but it is much, much more effective.

These tests are always a mixture of manual and automatic. A completely manual or completely automatic solution doesn’t make sense.

Wi-Fi

These attacks need special hardware to perform (see the Hak5 WiFi Pineapple or similar) and by definition need to be carried out locally, within Wi-Fi range of the target site. They are therefore always a mixture of manual and automatic.

A tester may also walk into a building reception, get the guest Wi-Fi key, and then check that there are no bridges between the guest Wi-Fi and the corporate network.
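
Once connected to the guest Wi-Fi, the bridge check can be as simple as trying to open TCP connections to addresses that should only be reachable from the corporate network. The sketch below uses Python's standard socket module; the function names are ours, and the target list in the usage comment uses placeholder addresses that would need to be replaced with agreed, in-scope targets.

```python
import socket


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable networks.
        return False


def find_bridges(targets, timeout: float = 2.0):
    """Return the (host, port) pairs that answer from the current network."""
    return [(host, port) for host, port in targets
            if is_reachable(host, port, timeout)]


# Usage, run from a device on the guest Wi-Fi (placeholder addresses):
#   corporate_targets = [("192.0.2.10", 445), ("192.0.2.20", 3389)]
#   print(find_bridges(corporate_targets))
# Any result other than an empty list suggests a bridge worth investigating.
```

This only tests TCP reachability on the ports listed, so an empty result is evidence of separation rather than proof of it.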

Closing Thoughts

For Infrastructure & Web Application

Scanning technologies are easy to use, accurate and safe, and they find virtually the same issues as pen-testing, so they should be used first (see Maturity Assessment Model for more details).

Once scanners are in place, you should then prioritise and patch the issues based on risk, i.e. have a Risk Based Vulnerability Management process.

Once all of this is done, adding manual pen-test information into the risk model makes perfect sense. This should be done by people because they will think outside of the box.
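
As a sketch of what feeding both sources into one risk model could look like, the example below ranks scanner and pen-test findings together. The scoring formula (severity multiplied by asset criticality), the field names, and the example findings are all assumptions made for illustration; a real program would use its own risk model.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    severity: float          # e.g. a 0-10 severity score (assumed scale)
    asset_criticality: int   # 1 (low) to 5 (business critical), assumed scale
    source: str              # "scanner" or "pen-test"


def risk_score(finding: Finding) -> float:
    """Illustrative score: technical severity weighted by asset importance."""
    return finding.severity * finding.asset_criticality


def prioritise(findings):
    """Return findings ordered from highest to lowest risk score."""
    return sorted(findings, key=risk_score, reverse=True)


# Hypothetical findings from both sources.
findings = [
    Finding("Missing patch on test server", 9.8, 1, "scanner"),
    Finding("Executable upload folder on customer portal", 8.0, 5, "pen-test"),
    Finding("Weak TLS configuration on intranet", 5.0, 2, "scanner"),
]

for f in prioritise(findings):
    print(f"{risk_score(f):5.1f}  {f.source:8}  {f.title}")
```

In this example the pen-test finding ranks first even though its severity is lower, because it affects a more important asset, which is the kind of result a risk-based approach is meant to surface.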

For Wi-Fi & Social Engineering

These tests need to be driven by a human, with tools to help speed things up.

Next steps

If you want to learn more about the vendors we represent, read more on our vendor page or if you want to book a consultation or request a demo click here.

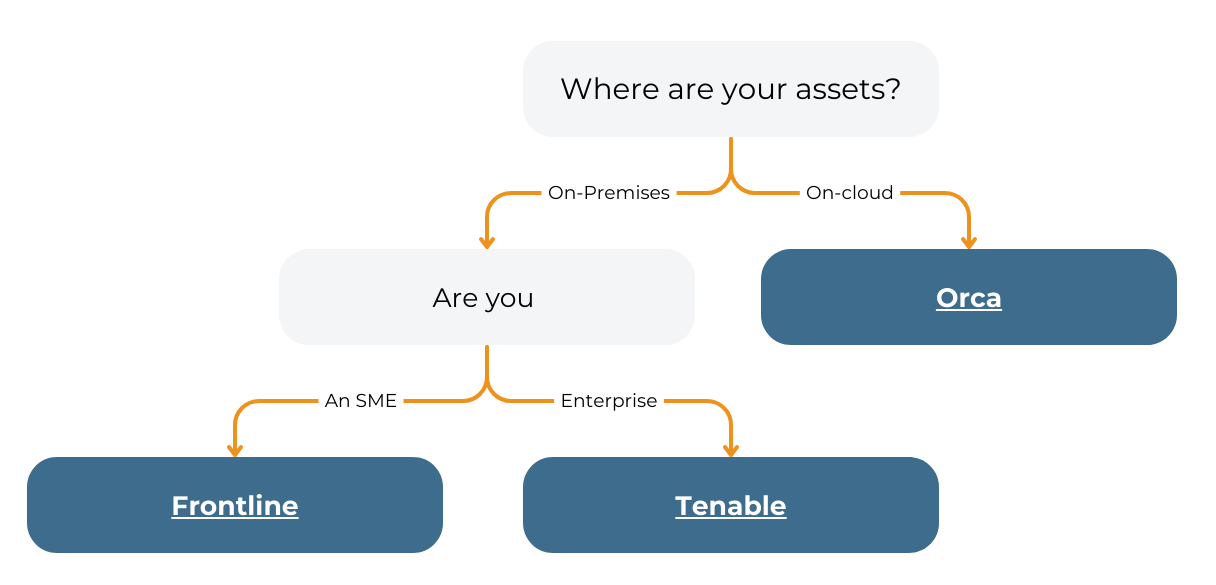

S4 Applications helps organisations protect their assets with vulnerability assessment and remediation solutions whether you are an Enterprise, SME or Security consultant.